As Waymo in the US and Baidu's Apollo Go in China expand robotaxi services on public roads, South Korea's autonomous-driving industry is under pressure to find a viable route of its own.

Tesla has entered what analysts describe as a "high-investment cycle" in 2026, marking a pivotal shift in the company's strategic priorities from pure electric-vehicle expansion toward artificial intelligence, robotics, and semiconductor self-sufficiency.

As the autonomous driving industry pushes toward "eyes-off" highway driving, the race is increasingly shifting from electric vehicles to perception systems capable of operating safely in the real world.

After a widespread malfunction involving Baidu's Apollo Go robotaxi service in Wuhan left passengers stranded and disrupted traffic, Chinese authorities have reportedly suspended the issuance of new autonomous driving permits. The suspension marks at least the second time regulators have halted approvals following an incident linked to Baidu.

Tesla has quietly taken a significant step deeper into artificial intelligence (AI), disclosing a US$2 billion acquisition of an unnamed AI hardware company in a single sentence buried in its latest regulatory filing.

As advances in artificial intelligence (AI) accelerate, the global auto industry is undergoing a transformation unlike any in its history. Jheng-Jian Wang, chairman of Taiwan's Automotive Research & Testing Center (ARTC), said the car of the future will no longer be merely a means of transportation, but a "mobile living space" capable of reasoning and decision-making. At the center of this shift, he said, are two technologies: the smart cockpit and end-to-end AI driving systems.

While much of the world's attention remains fixed on robotaxis navigating open roads, David Shen, chief executive of Turing Drive, argues that the true commercial breakthrough for autonomous driving may lie elsewhere, in what he calls "specialized environments," such as factories, ports, and rural regions.

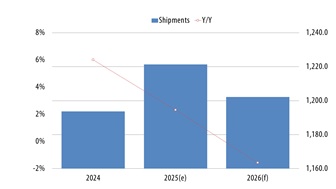

The annual "360°MOBILITY Mega Shows," a major gathering for the auto parts and mobility industry, opens on the 14th, drawing heightened attention to the growing role of Taiwan's suppliers in next-generation automotive technology. As software-defined vehicles (SDVs) emerge as a central industry direction, the share of automotive semiconductors and software in vehicle development is rising rapidly, according to a DIGITIMES Research report.