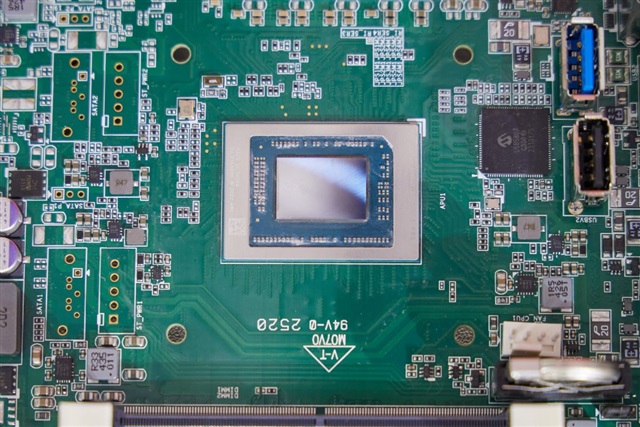

Google recently announced an expanded collaboration with Intel to increase the deployment of Xeon processors in its cloud AI data centers. This move reflects the rapidly growing demand for CPUs in AI infrastructure, prompting Google to secure supply chains...
