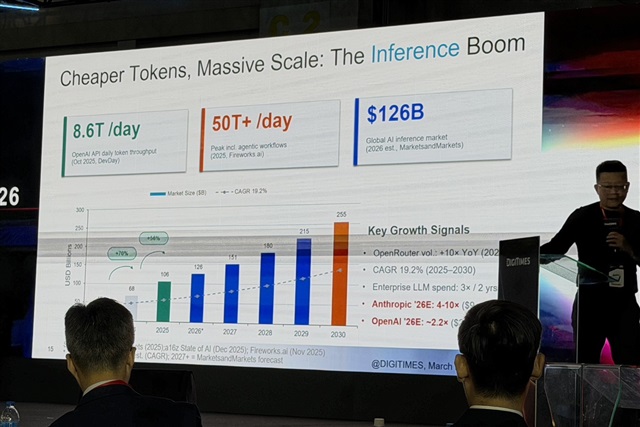

Artificial intelligence is entering a new phase in which inference, rather than training, is becoming the dominant driver of computing demand, as rising costs and memory constraints begin to reshape AI infrastructure,...
