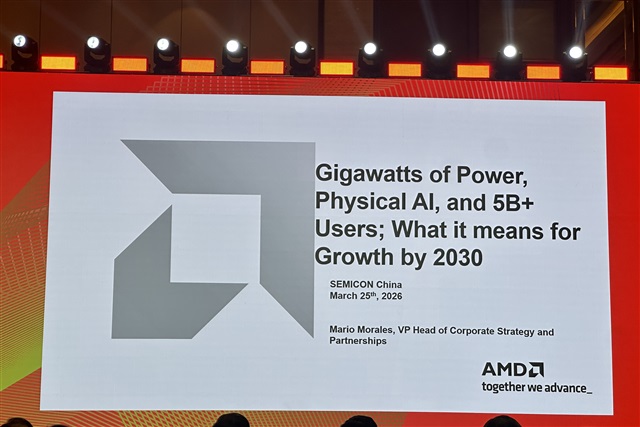

At SEMICON China 2026, AMD VP and Head of Corporate Strategy and Partnerships Mario Morales delivered a stark warning: while the AI market is rapidly expanding toward a projected US$1.7 trillion valuation, the ultimate constraint may not be silicon...
